In a world characterized by rapid technological advancements and constant change, the ability to ask meaningful questions is emerging as a crucial survival skill.

In a previous Substack post, I wrote about how existential intelligence is a critical survival skill. Like existential intelligence, inquisitiveness is a trait that is fundamentally difficult to automate because it requires a degree of self-reflection, introspection, and out-of-the-box thinking.

Inquisitiveness, or “the art of asking questions,” is a fundamental aspect of human cognition that drives learning, innovation, and personal growth.

As we navigate the complexities of the 21st century, cultivating this skill becomes essential for both individuals and organizations. Above all, inquisitiveness needs to be cultivated within the classroom for younger generations, especially as they increasingly rely on AI tools to write content, conduct research, and parse and make sense of data.

The Importance of Inquisitiveness

From a young age, humans exhibit a natural curiosity about the world around them. This innate inquisitiveness propels us to explore, discover, and understand our environment. However, as we grow older, societal and educational structures often discourage questioning, favoring rote memorization and standardized answers instead. This shift stifles our natural curiosity and limits our potential for growth and innovation.

Inquisitiveness is not just about asking questions; it’s about asking the right questions.

It involves a willingness to challenge assumptions, seek deeper understanding, and explore new possibilities.

We live in the information age, meaning that information is abundant but wisdom is scarce. In such a context, the ability to ask insightful questions is what will set individuals and organizations apart.

AI and the Limitations of Inquisitiveness

AI falls short in the realm of inquisitiveness. GenAI models like ChatGPT can provide answers based on their training data, but they lack the ability to genuinely question, probe, and explore in a human-like manner.

AI operates within the boundaries of its programming and training data. It can identify patterns, generate responses, and even simulate a form of curiosity by asking questions that lead to predefined goals. However, it does not possess the intrinsic motivation or the cognitive flexibility to engage in open-ended, exploratory questioning that characterizes true inquisitiveness.

Let’s use GPT-4 as an example.

GPT-4 can simulate curiosity by generating questions that appear to seek deeper understanding, and its ability to recognize patterns in vast amounts of data enables it to ask questions that connect disparate pieces of information.

This adaptive capability allows GPT-4 to engage in dynamic and interactive dialogues, often making it seem as though it is actively seeking to understand the user’s needs and interests. However, true inquisitiveness is driven by an intrinsic desire to learn and explore, which GPT-4 inherently lacks.

Its questions are generated based on pre-existing patterns in its training data and the immediate context of the conversation, rather than from a genuine desire to understand or discover new information.

Operating within the confines of its programming, GPT-4 cannot engage in truly open-ended exploration and lacks cognitive flexibility — the ability to shift thinking and approach problems from multiple angles. Furthermore, human inquisitiveness is shaped by personal experiences and emotions, elements that GPT-4 cannot replicate. Its responses are generated impersonally, devoid of the depth that comes from human lived experience.

Machine Learning and Inquisitiveness

Machine learning (ML), which encompasses different types of learning such as supervised, unsupervised, and reinforcement learning, showcases some elements that might appear inquisitive.

In supervised learning, models learn from labeled data to make predictions.

Unsupervised learning involves finding hidden patterns in unlabeled data, exhibiting a semblance of curiosity by discovering structures without explicit guidance.
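To make the contrast between these two settings concrete, here is a minimal Python sketch on made-up one-dimensional data. The data, the labels, and the function names (`fit_label_means`, `predict`, `two_means`) are illustrative assumptions written for this piece, not the API of any particular library. The supervised half leans on human-provided labels; the unsupervised half sees only the raw numbers.

```python
# Toy data (hypothetical): six 1-D measurements and human-provided labels.
points = [1.0, 1.2, 0.8, 5.0, 5.2, 4.8]
labels = ["low", "low", "low", "high", "high", "high"]


def fit_label_means(xs, ys):
    """Supervised: use the labels to compute one mean per class."""
    grouped = {}
    for x, y in zip(xs, ys):
        grouped.setdefault(y, []).append(x)
    return {label: sum(vals) / len(vals) for label, vals in grouped.items()}


def predict(label_means, x):
    """Predict the label whose class mean is closest to x."""
    return min(label_means, key=lambda label: abs(label_means[label] - x))


def two_means(xs, iterations=10):
    """Unsupervised: find two cluster centers without looking at any labels."""
    centers = [min(xs), max(xs)]  # simple starting guess
    for _ in range(iterations):
        clusters = [[], []]
        for x in xs:
            nearest = 0 if abs(x - centers[0]) <= abs(x - centers[1]) else 1
            clusters[nearest].append(x)
        # Keep the old center if a cluster happens to be empty.
        centers = [
            sum(c) / len(c) if c else centers[i]
            for i, c in enumerate(clusters)
        ]
    return centers


label_means = fit_label_means(points, labels)
print(predict(label_means, 1.1))  # prediction guided by the labeled examples
print(two_means(points))          # two groups found with no labels at all
```

In both cases the procedure is fixed in advance: the model finds structure because it was told to minimize distance to a mean, not because it wondered what the numbers represent.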

Reinforcement learning is perhaps the closest to inquisitiveness, where agents learn by interacting with their environment, exploring different strategies to maximize rewards.
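As a rough illustration of what “exploring” means for such an agent, the sketch below uses epsilon-greedy action selection on a toy multi-armed bandit. This is one simple, well-known exploration strategy chosen for illustration; the reward probabilities, the step count, and the function name `run_epsilon_greedy` are assumptions made up for this example.

```python
import random


def run_epsilon_greedy(reward_probs, steps=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent on a multi-armed bandit with 0/1 rewards."""
    rng = random.Random(seed)
    counts = [0] * len(reward_probs)       # how often each action was tried
    estimates = [0.0] * len(reward_probs)  # running average reward per action

    for _ in range(steps):
        if rng.random() < epsilon:
            # "Explore": try a random action, regardless of current estimates.
            action = rng.randrange(len(reward_probs))
        else:
            # "Exploit": pick the action that currently looks best.
            action = max(range(len(reward_probs)), key=lambda a: estimates[a])

        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        counts[action] += 1
        # Incremental update of the average reward for this action.
        estimates[action] += (reward - estimates[action]) / counts[action]

    return counts, estimates


# Hypothetical environment: three actions with different payoff chances.
counts, estimates = run_epsilon_greedy([0.2, 0.5, 0.8])
best_action = max(range(len(estimates)), key=lambda a: estimates[a])
print("pull counts:", counts)
print("estimated rewards:", [round(e, 2) for e in estimates])
print("action the agent prefers:", best_action)
```

The agent's “curiosity” here is a coin flip with probability `epsilon`, and everything it learns is measured against a reward signal someone else defined.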

Despite these capabilities, ML models lack true inquisitiveness, as their exploration is confined to optimizing predefined objectives rather than an intrinsic desire to understand or discover new knowledge independently. I’ll go into these details towards the end of this piece, but first let’s explore the role of inquisitiveness in innovation and in K-12 education.

The Role of Inquisitiveness in Innovation

Inquisitiveness is the bedrock of innovation. It drives us to look beyond the obvious, to question the status quo, and to seek novel solutions to complex problems.

Consider the example of entrepreneurs who revolutionize industries by asking fundamental questions that others overlook. Companies like Airbnb, Uber, and Netflix disrupted traditional business models by questioning established norms and envisioning new ways to meet consumer needs. In fact, one of the examples we often cite in our workshops at AIDEN is the Kodak Moment: the point at which Kodak missed a disruptor by focusing on its bottom-line business rather than innovating. We all know what happened to Kodak: over time, it lost nearly all of its market share and became irrelevant in the new era of digital photography.

Kodak’s downfall is a classic example of how a lack of inquisitiveness and a failure to ask the right questions can lead to disruption. One way to avoid a Kodak Moment is to practice systems thinking, which encourages people to consider all of the micro and macro trends that might affect an organization and to question the extent of their impact.

By engaging in a systems view workshop and asking the right questions, Kodak could have anticipated the digital revolution and positioned itself as a leader in the evolving photography industry.

Nurturing Inquisitiveness in K-12 Education

The education system plays a pivotal role in shaping the inquisitiveness of future generations. Unfortunately, many traditional educational models prioritize rote learning and standardized testing over critical thinking and questioning. To prepare students for the challenges of the future, we need to incorporate multidisciplinary, curiosity-driven learning within existing educational hubs.

Educators can foster inquisitiveness by creating learning environments that encourage exploration, experimentation, and dialogue.

Instead of providing ready-made answers, teachers can guide students to formulate their own questions, investigate multiple perspectives, and develop a deeper understanding of the subjects they study. Project-based learning, inquiry-based approaches, and interdisciplinary studies are effective strategies to cultivate inquisitiveness.

The Challenge of AI-Assisted Cheating

Increasingly, students are using GPT and other AI tools to cheat and write content, which is making parents and educators globally pretty anxious.

A recent study, “Impact Analysis National Schools Tech Tracker, May 2024,” highlights the growing prevalence of AI usage in K-12 education and explores parent, teacher, and student sentiment towards chatbots. The study found that students in grades 6–12 are using these tools much more than last year (~70%), and there’s no doubt that schools globally are fearful of AI-assisted cheating on school assignments and exams.

Mitigating AI-Assisted Cheating

To address this issue while promoting inquisitiveness, educators can implement several strategies:

Emphasize the Learning Process Over the Final Product: Shift the focus from the final product to the learning process itself. Encourage students to document their thought processes, drafts, and revisions. This approach not only makes it harder to outsource work to AI but also promotes deeper engagement with the material.

Incorporate AI Literacy: Teach students about AI, including its benefits and limitations. By understanding how AI works, students can better appreciate the value of human creativity and critical thinking. This knowledge can also help them use AI responsibly and ethically.

Develop Original and Personalized Assignments: Create assignments that require personal reflection, unique perspectives, and original research. Personalized tasks that relate to students’ experiences and interests are less likely to be effectively completed by AI.

Foster a Culture of Academic Integrity: Cultivate an environment where academic honesty is valued and upheld. Discuss the importance of original work and the consequences of cheating. Encourage students to take pride in their own efforts and achievements.

Use AI as a Learning Tool: Integrate AI tools into the learning process as aids rather than shortcuts. For example, use AI to generate ideas, provide feedback, or simulate scenarios, but ensure that students still engage in critical thinking and problem-solving.

A Granular Look at How AI Falls Short on Inquisitiveness

Despite AI’s impressive advancements, it falls short when it comes to true inquisitiveness. AI models, including the most advanced Generative Pre-trained Transformers (GPT), are designed to process and analyze vast amounts of data, identify patterns, and generate responses. However, they lack several key attributes that are intrinsic to human inquisitiveness:

Contextual and Temporal Integration

AI models like GPT-4 have made significant advances in their ability to process information; the latest versions, such as GPT-4 Turbo, have a context window of up to 128,000 tokens. This is a substantial increase over previous iterations, which had much smaller context windows. For example, GPT-3.5 started with a context window of 4,000 tokens, which makes this a 32-fold increase in just a year (OpenAI Help Center).

Despite this progress, there are still inherent limitations. While the 128,000-token context window allows GPT-4 to handle large amounts of information in a single prompt (equivalent to about 300 pages of text), it’s not sufficient for truly integrating extensive, temporally distant information.

Human inquisitiveness involves drawing connections across various contexts and long periods, a feat AI struggles with due to its fixed context window and the computational complexity involved in processing such large datasets continuously. Empirical studies show that as the context length approaches its maximum limit, the model’s performance in maintaining coherence and recalling earlier information degrades, often leading to hallucinations or missing important details.
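The figures in this section can be checked with a few lines of arithmetic, and the idea of a fixed window can be sketched just as briefly. In the Python sketch below, the token counts come from the text above, while the conversion factors (about 0.75 words per token and about 320 words per page) are rough assumptions. The `fit_to_window` helper is a hypothetical illustration of one simple way an application might handle overflow, not a description of how any particular model works internally.

```python
# Figures quoted in the text.
GPT35_WINDOW = 4_000         # tokens
GPT4_TURBO_WINDOW = 128_000  # tokens

# Rough conversion factors (assumptions, not exact values).
WORDS_PER_TOKEN = 0.75
WORDS_PER_PAGE = 320

growth_factor = GPT4_TURBO_WINDOW / GPT35_WINDOW
approx_pages = GPT4_TURBO_WINDOW * WORDS_PER_TOKEN / WORDS_PER_PAGE


def fit_to_window(tokens, window):
    """Keep only the most recent `window` tokens; older ones are dropped."""
    return tokens[-window:] if window > 0 else []


# A ten-token "conversation" squeezed into a four-token window.
history = [f"tok{i}" for i in range(10)]
visible = fit_to_window(history, window=4)

print(growth_factor)  # 32.0
print(approx_pages)   # 300.0
print(visible)        # ['tok6', 'tok7', 'tok8', 'tok9']
```

However large the window, anything that falls outside it is simply unavailable to the model, whereas a person can return to a question years later.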

Lack of Intrinsic Motivation

Inquisitiveness in humans is driven by intrinsic motivation — a natural desire to learn, explore, and understand.

Neuroscientific research has shown that intrinsic motivation activates brain regions associated with reward and learning, encouraging individuals to seek out challenges and novel experiences without external incentives (Frontiers) (MDPI).

In contrast, AI lacks this intrinsic drive and operates solely based on external instructions and programmed goals. Without an intrinsic desire to know or understand, AI cannot initiate the kind of exploratory questioning that characterizes human curiosity. This fundamental difference highlights why AI, despite its advanced capabilities, cannot replicate the depth of human inquisitiveness (American Psychological Association).

Absence of Emotional and Experiential Learning

Human inquisitiveness is often fueled by emotional and experiential learning. Our personal experiences and emotional responses to those experiences shape the questions we ask and the knowledge we seek. AI, devoid of emotions and personal experiences, lacks this dimension of inquisitiveness. It cannot reflect on past experiences to form new questions or pursue knowledge driven by emotional engagement.

Rigidity in Problem-Solving Approaches

AI approaches problem-solving based on pre-existing data and learned patterns. In contrast, human inquisitiveness often leads to novel problem-solving methods, driven by the desire to explore uncharted territories. This rigidity in AI’s approach limits its ability to think creatively and ask questions that might lead to unconventional solutions.

Dependence on Training Data

AI’s capacity to ask questions is inherently limited by the data it has been trained on. If certain concepts or areas of knowledge are not well-represented in its training data, AI will struggle to generate meaningful questions about those topics. Humans, however, can question beyond the scope of their current knowledge, driven by curiosity about the unknown.

AlphaGo and the Limits of AI Inquisitiveness

I often tell people that the release of AlphaGo was a eureka moment for me when it came to AI development. The fact that we had a system that could demonstrate original thinking was truly awe-inspiring, even more so than everything I saw ChatGPT do many years later.

The development of AlphaGo, the AI program that defeated the world champion Go player, is a prime example of AI’s strengths and limitations.

AlphaGo’s success was built on its ability to analyze vast amounts of game data and simulate numerous potential moves, leveraging deep neural networks and reinforcement learning. However, its achievements also highlight key limitations in AI’s inquisitiveness.

Mastery Through Simulation, Not Curiosity

As incredible and impressive as AlphaGo was and still is, it’s important to note a few things.

AlphaGo mastered Go through extensive training and simulation, playing millions of games against itself to improve its strategies. This approach contrasts sharply with human learning, where curiosity and questioning play crucial roles. Human Go players often explore “why” a particular strategy works, seeking to understand underlying principles, whereas AlphaGo’s improvement was driven by optimizing outcomes based on its training, not by an intrinsic desire to understand.

Lack of Understanding Beyond Gameplay

AlphaGo excels within the well-defined parameters of the game of Go, but it does not possess the ability to ask broader questions about the game’s philosophy, cultural significance, or the psychological aspects of human opponents. Its focus is purely on gameplay optimization, lacking the inquisitive nature that drives humans to explore the wider context and implications of their activities.

While AlphaGo made moves that surprised even seasoned players, these innovations were within the scope of its training data and simulations. Human inquisitiveness, on the other hand, can lead to entirely new ways of thinking and playing that go beyond existing patterns. This capacity for true innovation, driven by curiosity and the desire to explore the unknown, is something AI has yet to achieve.

The Role of Inquisitiveness in AI Prompting

Inquisitiveness is not only a human strength but also a critical skill when interacting with AI.

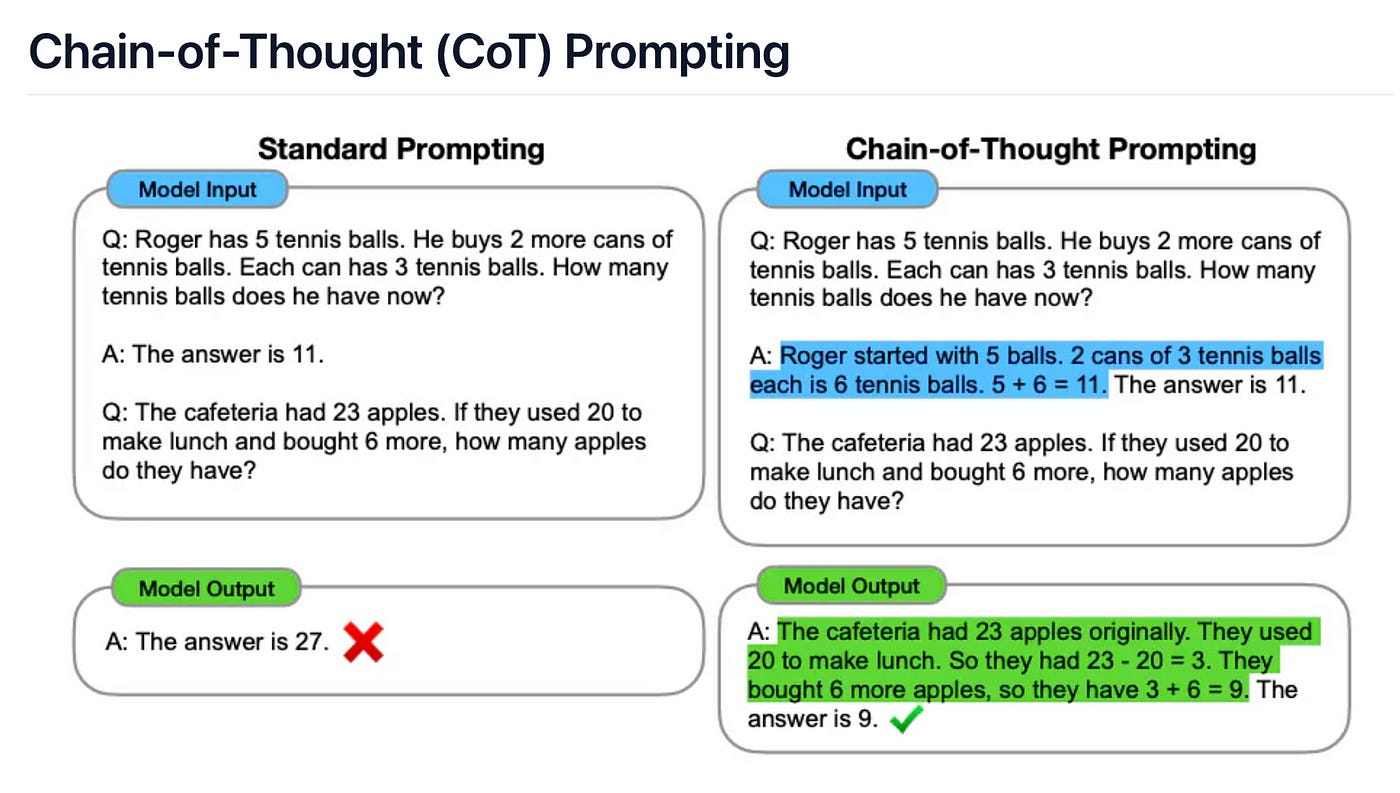

The effectiveness of AI tools, particularly in generating useful and relevant responses, relies heavily on the quality of the questions posed. Recent research on chain-of-thought prompting in advanced GPT models highlights this point. Chain-of-thought prompting involves guiding a model through a step-by-step reasoning process, for example by showing it worked examples that spell out their intermediate steps or by asking a series of linked questions. This technique significantly enhances the model’s performance in complex tasks by mimicking the human way of breaking down problems and exploring them sequentially.

For instance, a study published by researchers at Google demonstrated that chain-of-thought prompting can improve a model’s ability to solve math word problems, perform logical reasoning, and handle multi-step tasks.

This approach leverages the model’s existing capabilities but requires human-like inquisitiveness to formulate the right sequence of prompts that lead to accurate and meaningful outcomes.
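To show what formulating such a sequence can look like in practice, here is a minimal Python sketch that assembles a chain-of-thought style prompt as plain text. The function name `build_cot_prompt`, the worked example, and the closing cue “Let’s think step by step” are illustrative assumptions written for this piece; the sketch only builds the prompt string and does not call any model or API.

```python
def build_cot_prompt(question, worked_examples):
    """Assemble a few-shot prompt whose examples spell out their reasoning."""
    parts = []
    for example in worked_examples:
        parts.append(f"Q: {example['question']}")
        parts.append(
            f"A: {example['reasoning']} The answer is {example['answer']}."
        )
        parts.append("")  # blank line between examples
    # The new question ends with a cue that invites step-by-step reasoning.
    parts.append(f"Q: {question}")
    parts.append("A: Let's think step by step.")
    return "\n".join(parts)


# Hypothetical worked example written for this sketch.
examples = [
    {
        "question": "A class has 4 tables with 6 students each. "
                    "5 students leave. How many remain?",
        "reasoning": "4 tables times 6 students is 24 students. "
                     "24 minus 5 is 19.",
        "answer": "19",
    }
]

prompt = build_cot_prompt(
    "A library has 3 shelves with 12 books each and lends out 7. "
    "How many books are left?",
    examples,
)
print(prompt)
```

The human contribution is everything outside the function: choosing which example to show, deciding how to break the problem down, and judging whether the model’s reasoning actually holds up.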

This intersection of human inquisitiveness and AI’s computational power underscores the importance of asking the right questions. Effective AI use hinges on our ability to prompt it in ways that stimulate deeper analysis and nuanced responses. Therefore, cultivating inquisitiveness is not only essential for personal and professional growth but also for maximizing the potential of AI technologies.

The Future of Human+AI Collaboration

The development of AlphaGo and its groundbreaking achievements provide a compelling case study for the future of human+AI collaboration. While AI like AlphaGo excels in data analysis and strategic optimization within defined parameters, it lacks the intrinsic inquisitiveness, emotional understanding, and contextual awareness that are hallmarks of human intelligence. By combining the strengths of both humans and AI, we can envision new roles and skills that will redefine the future of work and innovation.

Strategic AI Coach

One potential future role is that of a Strategic AI Coach. In this role, humans would collaborate with AI systems to enhance strategic decision-making in various fields, such as business, sports, and even personal development. Drawing inspiration from AlphaGo’s success in mastering Go, a Strategic AI Coach could leverage AI’s ability to analyze vast amounts of data and simulate outcomes while relying on human intuition, creativity, and contextual understanding to devise innovative strategies.

For instance, in business, a Strategic AI Coach could help executives navigate complex market dynamics by providing data-driven insights and predictive models. The human coach would then interpret these insights, ask probing questions, and apply their knowledge of industry trends and organizational culture to develop actionable strategies that align with the company’s goals and values.

AI-Augmented Researcher

Another promising role is that of an AI-Augmented Researcher. This role would involve researchers using AI tools to enhance their investigative processes. While AI can quickly sift through large datasets, identify patterns, and generate hypotheses, human researchers would provide the inquisitiveness and critical thinking needed to formulate meaningful research questions and design experiments.

For example, in medical research, AI could analyze genetic data to identify potential biomarkers for diseases. The AI-Augmented Researcher would then explore these findings, ask questions about their biological significance, and design follow-up studies to validate and expand on the AI’s discoveries. This combination of AI’s data processing capabilities and human inquisitiveness could accelerate breakthroughs in various scientific fields.

Human+AI Innovation Teams

We can also envision the rise of Human+AI Innovation Teams within organizations. These teams would consist of humans and AI working together to drive innovation and solve complex problems. AI would handle the heavy lifting of data analysis, trend identification, and scenario simulation, while human team members would focus on creative thinking, ethical considerations, and long-term vision.

In product development, for instance, an AI could analyze customer feedback and market trends to identify unmet needs and opportunities. Human innovators would then use these insights to brainstorm new product ideas, ask critical questions about user experience and ethical implications, and guide the development process to ensure the final product aligns with both market demands and societal values.

AI-Empowered Educators

Finally, the role of AI-Empowered Educators offers a glimpse into the future of education. Educators could use AI to personalize learning experiences, track student progress, and identify areas where students need additional support. The AI would provide data-driven insights, while educators would apply their inquisitiveness to understand each student’s unique needs, ask questions to guide learning, and foster a supportive and engaging educational environment.

For example, an AI might identify that a student struggles with a particular math concept based on their performance data. The educator would then explore why the student is having difficulty, ask questions to uncover underlying issues, and tailor their teaching approach to address the student’s specific needs. This human+AI partnership could lead to more effective and personalized education, helping students achieve their full potential.

Ultimately, as I often teach people, the world’s best chess player today is neither a human nor an AI alone; it’s a centaur, a human and an AI playing together. Our best bet at navigating skills and job uncertainty, as

astutely points out, is to always invite AI to the table.